Enterprise Data Pipeline for SAP & Business Systems

Context

The organization relied on SAP ERP systems as a primary source of operational data, but analytics workflows were constrained by fragmented data access and slow reporting cycles.

Business teams depended on manual exports and static reports, which increased latency, reduced trust in data, and limited the ability to make timely decisions.

The objective was to design an automated, cloud-native data pipeline on AWS that could reliably ingest SAP data, scale with growing volumes, and enable analytics-ready consumption.

System Architecture

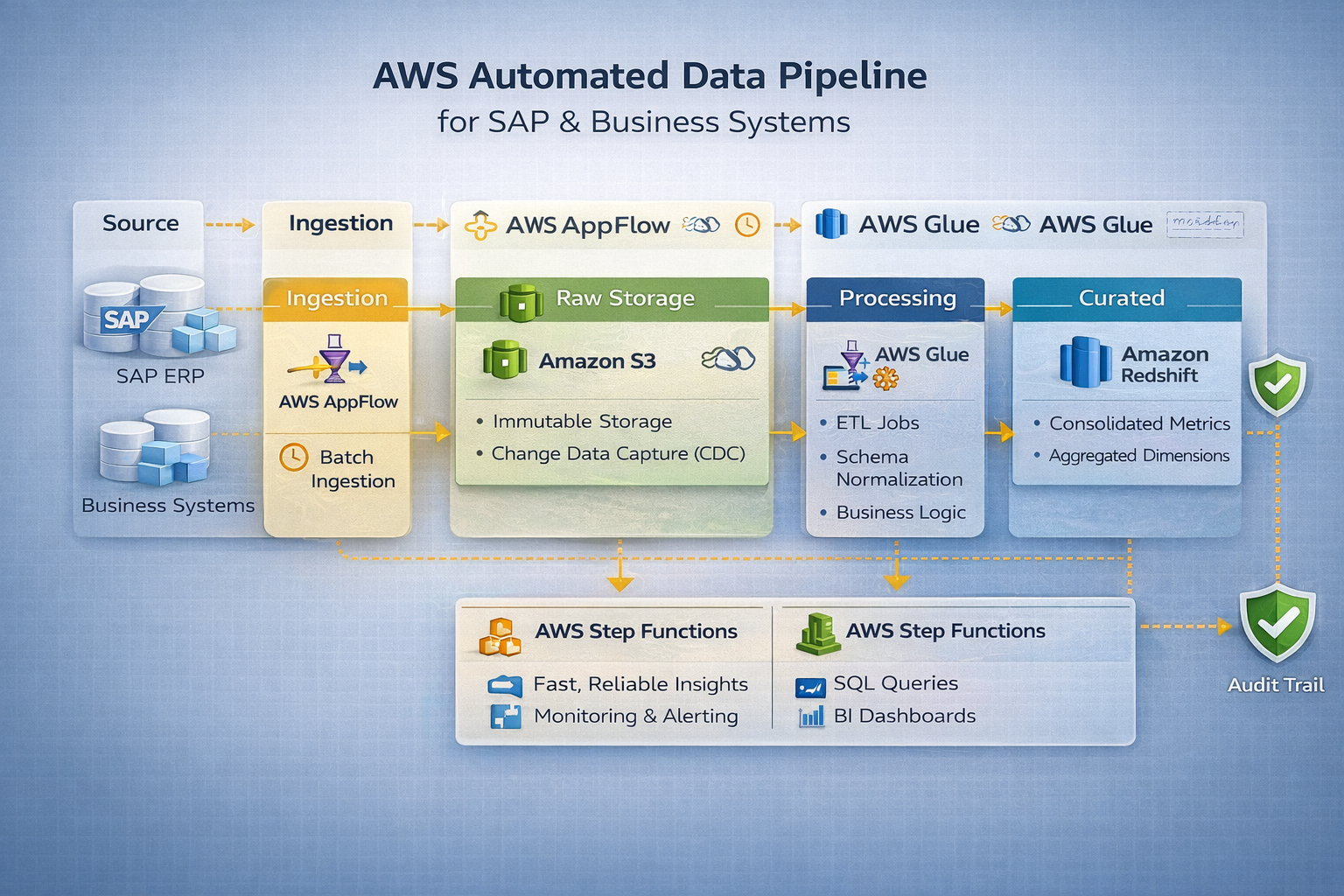

The platform follows a layered data architecture designed to maximize reliability, traceability, and scalability across heterogeneous enterprise systems.

- Source Systems: SAP modules (FI, MM, SD) alongside external business systems acting as authoritative data producers.

- Ingestion Layer: Scheduled batch extractors pull data from SAP and business systems, ensuring minimal impact on operational workloads.

- Raw / Staging Layer: Immutable storage in the data lake preserves source data exactly as received, enabling replay, auditing, and recovery.

- Curated Layer: Business logic, conformed dimensions, and standardized metrics are applied to create analytics-ready datasets.

- Serving Layer: Optimized tables exposed to BI tools and downstream consumers, supporting fast and consistent reporting.

- Orchestration & Monitoring: Centralized scheduling, dependency management, and pipeline observability ensure reliability and controlled execution.
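
The dependency management described in the last bullet can be sketched as a small directed graph of stages. This is a minimal illustration, not the orchestration tool used in the project: the stage names and the `execution_order` helper are hypothetical, chosen only to mirror the layers listed above. It uses the standard-library `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical stage names mirroring the layers above.
# Each stage maps to the set of stages it depends on.
PIPELINE: dict[str, set[str]] = {
    "ingest_sap": set(),
    "ingest_business_systems": set(),
    "stage_raw": {"ingest_sap", "ingest_business_systems"},
    "build_curated": {"stage_raw"},
    "publish_serving": {"build_curated"},
}


def execution_order(dag: dict[str, set[str]]) -> list[str]:
    """Return one valid run order in which every stage follows its dependencies.

    TopologicalSorter raises graphlib.CycleError if the graph contains a cycle,
    so a misconfigured pipeline fails before any stage runs.
    """
    return list(TopologicalSorter(dag).static_order())


if __name__ == "__main__":
    for stage in execution_order(PIPELINE):
        print(stage)
```

Declaring dependencies as data rather than as call order keeps the run sequence derivable and makes it straightforward to reject a cyclic configuration up front.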

Key Engineering Decisions

- Amazon AppFlow was chosen to integrate SAP data because it provides a managed SAP connector, which reduced operational overhead.

- Batch-based ingestion was preferred over streaming to align with SAP system constraints and reporting requirements.

- Amazon S3 was used as an immutable raw layer to ensure traceability, auditing, and recovery capabilities.

- AWS Glue was selected for ETL processing to enable scalable Spark-based transformations without infrastructure management.

- Amazon Redshift served as the analytical warehouse to support complex queries and concurrent BI workloads.
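
To make the immutable raw layer more concrete, the sketch below shows one possible object-key layout and a write-once rule. Everything here is an assumption for illustration: the key scheme, the `raw_object_key` helper, and the in-memory `WriteOnceStore` are hypothetical and stand in for Amazon S3. In S3 itself, immutability would more typically be enforced with bucket versioning or Object Lock than with application code.

```python
from datetime import date


def raw_object_key(source: str, entity: str, load_date: date, run_id: str) -> str:
    """Build a partitioned key so every batch lands at its own, never-reused path.

    The layout (source / entity / load_date / run_id) is an assumed convention,
    not the project's actual scheme.
    """
    return (
        f"raw/{source}/{entity}/"
        f"load_date={load_date.isoformat()}/run_id={run_id}/data.parquet"
    )


class WriteOnceStore:
    """In-memory stand-in for the raw bucket that refuses to overwrite a key."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, payload: bytes) -> None:
        # Refusing overwrites keeps source data exactly as it was received.
        if key in self._objects:
            raise FileExistsError(f"raw object already exists: {key}")
        self._objects[key] = payload

    def get(self, key: str) -> bytes:
        return self._objects[key]


if __name__ == "__main__":
    store = WriteOnceStore()
    key = raw_object_key("sap_fi", "gl_line_items", date(2024, 1, 15), "run-001")
    store.put(key, b"extracted rows")
    print(key)
```

Because the run identifier is part of the key, a re-extraction of the same day writes a new object instead of replacing the old one, which is what makes replay and auditing possible.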

Outcome

The automated data pipeline significantly reduced data processing time and eliminated manual reporting workflows.

Business stakeholders gained access to consistent, analytics-ready SAP data, improving decision-making speed and confidence across teams.

The solution established a scalable foundation for future analytics and cloud-based data initiatives.